The artificial intelligence industry just witnessed a watershed moment. On March 25, 2026, Google Research unveiled TurboQuant, a compression algorithm so efficient that within hours of its announcement, memory stock prices tumbled as investors scrambled to recalculate the future demand for AI hardware. This isn't just another incremental improvement—it's a fundamental shift in how we think about AI efficiency.

The Problem TurboQuant Solves: AI's Hidden Memory Crisis

Before we dive into what makes TurboQuant revolutionary, we need to understand the problem it solves. When you interact with a large language model like ChatGPT or Gemini, something invisible happens behind the scenes that costs companies billions of dollars: memory consumption.

Every conversation with an AI model requires what's called a key-value cache, a high-speed data store that holds context information so the model doesn't have to recompute it with every new token it generates. Think of it as the AI's working memory for your conversation. The cache grows linearly with the length of each conversation and with the number of concurrent users, and larger models store more per token, so at scale it adds up fast.

Here's the sobering reality: running a 70-billion-parameter large language model for 512 concurrent users can consume 512 GB of cache memory alone, nearly four times the memory needed for the model weights themselves. This isn't just a technical inconvenience—it's a fundamental bottleneck that limits how many users can simultaneously access AI services and how long their conversations can be.
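A rough sketch of where figures like this come from: the cache holds one key vector and one value vector per token, per layer, per attention head. The model shape below (80 layers, 8 key-value heads, head dimension 128, 16-bit values, 4,096-token contexts) is an assumed configuration chosen for illustration, not one stated in Google's announcement.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bytes_per_value=2):
    """Bytes held by the KV cache: one key and one value vector per token, layer and head."""
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_value  # 2 = key + value
    return per_token * seq_len * batch

# Assumed, illustrative shape for a 70B-class model (not taken from the TurboQuant paper).
total = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, seq_len=4096, batch=512)
weights = 70e9 * 2  # 70 billion parameters stored at 16 bits each

print(f"KV cache: {total / 2**30:.0f} GiB")
print(f"Weights:  {weights / 1e9:.0f} GB")
```

Under these assumptions the cache comes to 640 GiB against roughly 140 GB of weights. Every factor in the formula is linear, so doubling either the user count or the context length doubles the cache.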

What TurboQuant Actually Does

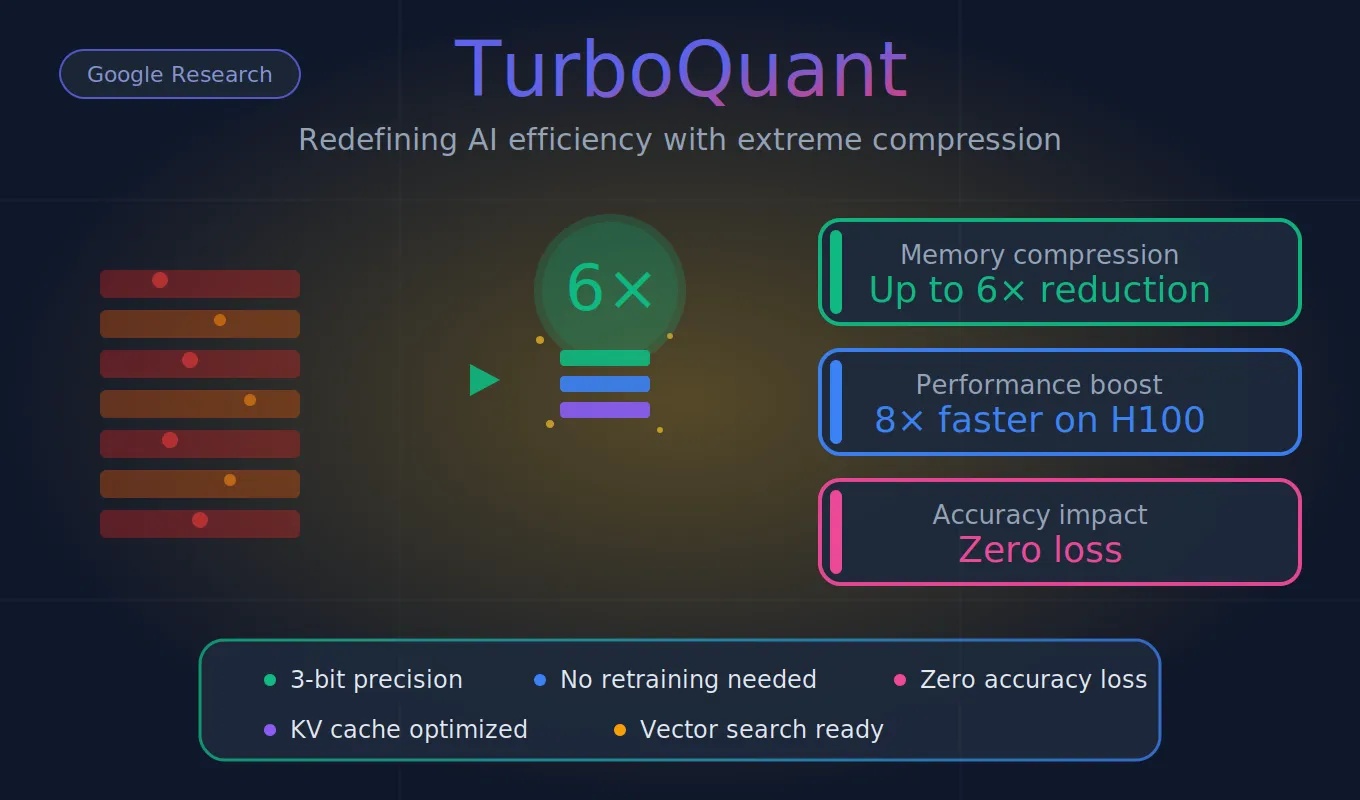

TurboQuant is a compression method that sharply shrinks the vectors an AI system has to store, with no measurable accuracy loss in Google's reported tests, making it suited to both key-value (KV) cache compression and vector search. But the devil, and the genius, is in the details.

Traditional quantization methods face a paradox: they shrink data vectors but must also store additional constants, the normalization values the system needs in order to decompress the data accurately. These constants typically add one or two extra bits per number, partially undoing the compression.
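To see how the overhead arises, here is a small worked calculation. The layout is an assumption for illustration: 4-bit values grouped into blocks, with each block carrying a 16-bit scale and a 16-bit zero point.

```python
def effective_bits(value_bits, block_size, constant_bits):
    """Average bits stored per number once the per-block constants are counted."""
    return value_bits + constant_bits / block_size

# Assumed layout: 4-bit values, 32 bits of constants (scale + zero point) per block.
for block_size in (32, 16):
    bits = effective_bits(value_bits=4, block_size=block_size, constant_bits=32)
    print(f"block of {block_size}: {bits} bits per number")
```

With blocks of 32 the constants cost one extra bit per number, and with blocks of 16 they cost two, which is the range the paragraph above describes.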

TurboQuant eliminates this overhead entirely through an ingenious two-stage process that represents a fundamental breakthrough in vector quantization.

The Technical Innovation: How TurboQuant Works

TurboQuant's architecture rests on two complementary algorithms that work in concert to pair extreme compression with no measurable accuracy loss, a combination previously thought out of reach.

Stage 1: PolarQuant - The Foundation

The first stage employs a technique called PolarQuant, which fundamentally reimagines how we represent data. PolarQuant converts data vectors from standard Cartesian coordinates into polar coordinates. This separates each vector into a radius (representing magnitude) and a set of angles (representing direction). Because the angular distributions are predictable and concentrated, PolarQuant skips the expensive per-block normalization step that conventional quantizers require.

This isn't just a clever trick—it's a mathematically rigorous transformation that exploits the geometric properties of high-dimensional data. By rotating vectors randomly and then applying polar transformation, PolarQuant creates a simplified data geometry that's far easier to compress efficiently.
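The polar idea can be sketched in a few lines. This is a deliberately simplified illustration, not the paper's algorithm: it skips the random rotation, splits the vector into independent coordinate pairs, keeps each radius at full precision, and quantizes each angle uniformly. All function names are hypothetical.

```python
import math

def to_polar_pairs(vec):
    """Split an even-length vector into (x, y) pairs and return (radius, angle) per pair."""
    return [(math.hypot(x, y), math.atan2(y, x)) for x, y in zip(vec[0::2], vec[1::2])]

def quantize_angle(theta, bits):
    """Map an angle in [-pi, pi] to one of 2**bits evenly spaced codes."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return int(round((theta + math.pi) / step)) % levels  # wrap +pi onto -pi

def dequantize_angle(code, bits):
    """Recover the angle at the centre of the given code."""
    step = 2 * math.pi / (1 << bits)
    return code * step - math.pi

def from_polar_pairs(pairs):
    """Convert (radius, angle) pairs back to Cartesian coordinates."""
    out = []
    for r, theta in pairs:
        out.extend((r * math.cos(theta), r * math.sin(theta)))
    return out

vec = [3.0, 4.0, -1.0, 0.0, 0.5, -0.5, 0.0, 2.0]
pairs = to_polar_pairs(vec)
codes = [quantize_angle(theta, bits=4) for _, theta in pairs]
recon = from_polar_pairs(
    [(r, dequantize_angle(c, bits=4)) for (r, _), c in zip(pairs, codes)]
)
print(codes)
print([round(x, 3) for x in recon])
```

Note that the angle codes need no per-block scale or zero point: the range of an angle is known in advance, which is the property the text credits for removing the normalization overhead.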

Stage 2: QJL - The Error Eliminator

The second stage is where TurboQuant truly shines. After PolarQuant handles the bulk of compression, a tiny residual error remains. TurboQuant spends a small remaining slice of its bit budget (just 1 bit) on applying the QJL algorithm to that residual. The QJL stage acts as a mathematical error-checker that eliminates bias, leading to a more accurate attention score.

QJL (Quantized Johnson-Lindenstrauss) isn't just cleaning up mistakes—it's ensuring that the compressed data maintains the mathematical properties that matter most for AI: accurate inner product calculations. This is crucial because AI models don't actually need perfect reconstruction of individual vectors; they need accurate similarity measurements between vectors.
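A minimal sketch of the underlying idea, assuming the standard sign-of-random-projection estimator: project the key onto random Gaussian directions, keep only the sign of each projection (one bit) plus the key's norm, and rescale so the inner-product estimate is unbiased. For simplicity this applies the estimator directly to a key vector rather than to a first-stage residual as TurboQuant does, and the function name and parameters are hypothetical.

```python
import math
import random

def qjl_inner_product(query, key, num_projections, seed=0):
    """Estimate <query, key> using only the signs of random projections of the key.

    The key side would store one sign bit per projection plus its norm;
    the query is projected at full precision.
    """
    rng = random.Random(seed)
    key_norm = math.sqrt(sum(x * x for x in key))
    acc = 0.0
    for _ in range(num_projections):
        row = [rng.gauss(0.0, 1.0) for _ in key]  # one Gaussian projection direction
        q_proj = sum(r * x for r, x in zip(row, query))
        k_proj = sum(r * x for r, x in zip(row, key))
        k_sign = 1.0 if k_proj >= 0 else -1.0  # the single stored bit
        acc += q_proj * k_sign
    # sqrt(pi/2) undoes the shrinkage caused by keeping only the sign.
    return math.sqrt(math.pi / 2) * key_norm * acc / num_projections

query = [0.2, -0.5, 0.1, 0.7, -0.3, 0.4, 0.0, -0.1]
key = [0.3, -0.4, 0.2, 0.6, -0.1, 0.5, 0.1, -0.2]
exact = sum(q * k for q, k in zip(query, key))
print("exact:   ", exact)
print("estimate:", qjl_inner_product(query, key, num_projections=4000))
```

The estimate is noisy for any single draw but correct on average, which is what matters for attention scores: the goal is an accurate inner product, not an accurate copy of the key.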

The Real-World Impact: Numbers That Matter

When research papers get published, they often contain impressive-sounding numbers that don't translate to real-world improvements. TurboQuant is different. The performance metrics are staggering:

TurboQuant compressed KV caches to 3 bits per value without requiring model retraining or fine-tuning, and without measurable accuracy loss across question answering, code generation, and summarization tasks. Memory reduction reached at least 6x relative to uncompressed KV storage.
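As a quick sanity check on figures like these, the ratio between stored bit widths can be computed directly. The passage above does not state the baseline precision, so both 16-bit and 32-bit baselines are shown here as assumptions.

```python
def compression_ratio(baseline_bits, quantized_bits):
    """How many times smaller each stored value becomes."""
    return baseline_bits / quantized_bits

for baseline_bits in (16, 32):
    ratio = compression_ratio(baseline_bits, quantized_bits=3)
    print(f"{baseline_bits}-bit baseline -> 3 bits: {ratio:.2f}x")
```

At 3 bits per value this gives about 5.3x against a 16-bit baseline and about 10.7x against a 32-bit one, so a headline ratio is only meaningful alongside the baseline and the exact bit budget it was measured with.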

But memory compression is only half the story. Speed matters just as much, and here TurboQuant delivers again: On NVIDIA H100 GPUs, 4-bit TurboQuant delivered up to an 8x speedup in computing attention logits over 32-bit unquantized keys.

These aren't marginal gains—they're transformative improvements that could reshape AI economics entirely.

Beyond Language Models: Vector Search and Broader Applications

While TurboQuant's application to language models grabbed headlines, its impact extends far beyond chatbots. Google tested it against existing methods on the GloVe benchmark dataset and found it achieved superior recall ratios without requiring the large codebooks or dataset-specific tuning that competing approaches demand.

This matters because vector search underpins countless AI applications:

- Semantic similarity searches across billions of documents

- Recommendation systems for streaming platforms

- Advertising targeting systems

- Threat intelligence retrieval in cybersecurity

- Image and video similarity matching

Each of these applications faces the same memory bottleneck that TurboQuant addresses. The algorithm's ability to reduce index memory without sacrificing recall directly affects query throughput at scale.
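Recall, the metric mentioned above, measures how many of the true nearest neighbours a compressed index still returns. A minimal sketch, with hypothetical result ids standing in for real search output:

```python
def recall_at_k(approx_ids, exact_ids, k):
    """Fraction of the true top-k neighbours that the approximate search returned."""
    return len(set(approx_ids[:k]) & set(exact_ids[:k])) / k

exact = [17, 4, 92, 8, 51]    # hypothetical ids from an exact, uncompressed search
approx = [17, 92, 4, 33, 51]  # hypothetical ids from a search over a compressed index
print(recall_at_k(approx, exact, k=5))
```

Order within the top k does not affect the score; only membership does. A compressed index that keeps recall close to 1.0 saves memory without changing which results users see.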

The Market Reaction: Why Memory Stocks Dropped

The financial markets understood the implications immediately. Micron dropped 3 per cent, Western Digital lost 4.7 per cent, and SanDisk fell 5.7 per cent, as investors recalculated how much physical memory the AI industry might actually need.

This wasn't an overreaction—it was a rational response to a technology that could fundamentally alter demand curves. If AI companies can serve six times as many users with the same hardware, or handle six times longer conversations, the calculus around memory procurement changes dramatically.

However, context matters. Wells Fargo analyst Andrew Rocha noted that TurboQuant directly attacks the cost curve for memory in AI systems but also cautioned that the demand picture for AI memory remains strong, and that compression algorithms have existed for years without fundamentally altering procurement volumes.

The question isn't whether TurboQuant will eliminate demand for AI memory—it won't. Rather, it's whether these efficiency gains will slow growth in memory procurement or simply enable more ambitious AI deployments at roughly the same infrastructure cost.

Rigorous Testing: Benchmarks That Matter

Google didn't just publish a paper with theoretical claims; it put TurboQuant through comprehensive testing. According to Google, the team evaluated all three algorithms (TurboQuant, PolarQuant, and QJL) across standard long-context benchmarks, including LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval, using open-source LLMs (Gemma and Mistral).

The Needle in a Haystack benchmark is particularly telling. This test evaluates whether an AI model can find specific information buried within extremely long contexts, a task that gets harder as compression becomes more aggressive. On this benchmark, TurboQuant matched full-precision performance up to 104k tokens under 4× compression.

Matching full precision at 4x compression isn't just good; it's strong evidence that the compression method preserves what matters for AI performance.

The Academic Pedigree: Why Credibility Matters

TurboQuant isn't vaporware or a hastily assembled proof-of-concept. It represents years of rigorous research across multiple papers and institutions. The paper, which will be presented at ICLR 2026, was authored by Amir Zandieh, a research scientist at Google, and Vahab Mirrokni, a vice president and Google Fellow, along with collaborators at Google DeepMind, KAIST, and New York University.

The work builds on a foundation of peer-reviewed research: QJL was published at AAAI 2025, and PolarQuant will be presented at AISTATS 2026. This multi-conference acceptance at top-tier venues signals that the research has survived intense scrutiny from the AI research community.

Implementation Reality: The Gap Between Paper and Production

Here's where things get interesting—and complex. Despite the groundbreaking nature of TurboQuant, Google hasn't released official implementation code yet. However, the AI developer community hasn't waited for permission.

Independent developers are already building working implementations from the paper alone, even though Google has not yet released any official code or integration libraries. Developers have created implementations in PyTorch, MLX for Apple Silicon, and even custom CUDA kernels.

One developer's experience is particularly encouraging: they built a custom implementation and tested it on a consumer GPU (RTX 4090), reporting character-identical output to the uncompressed baseline at 2-bit precision. Reports like this are anecdotal, but independent teams reproducing the claimed results is early evidence that TurboQuant's promises hold up.

The Competitive Landscape: How TurboQuant Compares

TurboQuant isn't the only compression method heading to ICLR 2026. Nvidia's KVTC achieves 20x compression with less than 1 percentage point accuracy penalty, tested on models from 1.5B to 70B parameters.

This creates an interesting comparison. KVTC achieves higher compression ratios but requires a calibration step that TurboQuant avoids. The two methods may serve different use cases: KVTC targets offline cache storage and reuse across conversation turns, while TurboQuant is designed for online, real-time quantization during inference.

The existence of competing approaches isn't a weakness—it's evidence of a healthy research ecosystem tackling one of AI's most pressing bottlenecks from multiple angles.

What This Means for AI Accessibility

The most profound impact of TurboQuant may not be on big tech companies with unlimited budgets—it may be on democratizing access to powerful AI.

Smaller companies and independent developers often can't afford the massive GPU clusters required to run state-of-the-art models. If TurboQuant delivers on its promise of 6x memory reduction, it means models that previously required expensive server-grade hardware could run on more modest setups.

This isn't just about cost savings—it's about who gets to participate in the AI revolution. When the infrastructure barriers drop, innovation becomes more distributed and diverse.

The Roadmap: What Happens Next

The community is watching several key milestones:

- Q2 2026: Open-source code and framework integrations are expected. Major platforms like vLLM and Hugging Face are likely candidates for early adoption.

- Q4 2026: Commercial products incorporating TurboQuant, probably starting with cloud providers looking to reduce infrastructure costs while improving service quality.

- Beyond 2026: The same ideas can extend to image and video embedding compression. Combining with outlier-handling methods could push the field toward practical 2-bit systems.

Why Syncsup.cc Should Care

For platforms like Syncsup.cc that operate in the AI and technology space, TurboQuant represents more than just a technical advancement—it's a signal of where the industry is heading.

The shift toward extreme efficiency isn't just about cost optimization; it's about sustainability, accessibility, and the fundamental economics of AI deployment. Companies that understand and leverage these advances early will have significant competitive advantages.

As AI becomes more integrated into every aspect of technology, from search to recommendation systems to real-time applications, the efficiency innovations like TurboQuant will separate the leaders from the followers.

The Internet's Verdict: The "Pied Piper" Comparisons

The tech community immediately drew parallels to HBO's "Silicon Valley," where the fictional startup Pied Piper developed a revolutionary compression algorithm. The running joke online was that if Google's AI researchers had a sense of humor, they would have named TurboQuant "Pied Piper."

The comparison is apt: both involve compression breakthroughs that seem almost too good to be true. The difference is that TurboQuant is real, rigorously tested, and already being replicated by independent developers.

Some industry leaders are calling it Google's "DeepSeek moment"—a reference to efficiency gains that prove there's still enormous room for optimization in AI systems.

Critical Analysis: The Limitations and Questions

No technology is perfect, and TurboQuant has limitations worth acknowledging. Poor random-seed handling could introduce small bias, though the paper argues the effect is negligible in high dimension. This is a technical caveat that matters for implementation but appears manageable based on testing.

More significantly, the 8x speedup claim deserves scrutiny. It's measured specifically on attention logit computation against a baseline, not on end-to-end inference throughput. The headline-grabbing "8x faster" applies to a specific component of the AI pipeline, not the entire system.
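Amdahl's law makes the gap concrete: if only a fraction of total inference time is spent on the accelerated step, the overall gain is much smaller than the component gain. The fractions below are hypothetical, since the real share depends on the model, batch size, and context length.

```python
def end_to_end_speedup(fraction_accelerated, component_speedup):
    """Amdahl's law: overall speedup when only part of the runtime gets faster."""
    return 1.0 / ((1.0 - fraction_accelerated) + fraction_accelerated / component_speedup)

# Hypothetical shares of runtime spent computing attention logits.
for fraction in (0.1, 0.3, 0.6):
    overall = end_to_end_speedup(fraction, component_speedup=8.0)
    print(f"{fraction:.0%} of runtime accelerated 8x -> {overall:.2f}x overall")
```

Only when the accelerated step accounts for nearly all of the runtime does the end-to-end figure approach 8x.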

Additionally, TurboQuant's real-world impact depends entirely on adoption. Even the most brilliant research paper is just a PDF until someone integrates it into production systems that millions of users actually use.

The Broader Context: AI Efficiency as a Movement

TurboQuant arrives at a moment when the AI industry is being forced to confront the economics of inference. Training a model is a one-time cost, however enormous. Running it, serving millions of queries per day with acceptable latency and accuracy, is the recurring expense that determines whether AI products are financially viable at scale.

This shift in focus—from training efficiency to inference efficiency—represents a maturation of the AI industry. The race to build bigger models is giving way to a more nuanced competition around running models more efficiently.

Implications for Data Centers and Cloud Providers

The data center implications are staggering. Major cloud providers are planning hundreds of billions in AI infrastructure spending through 2026. A technology that reduces memory requirements by 6x doesn't reduce overall spending by 6x—memory is only one component—but it fundamentally alters the optimization equation.

Will companies reduce their hardware footprint and pocket the savings? Or will they maintain infrastructure spending but serve several times more users and enable longer context windows? The answer probably depends on competitive dynamics and market demand.

Security and Infrastructure Considerations

Security and AI infrastructure teams running large-scale semantic search or LLM inference pipelines carry direct exposure to the memory constraints these algorithms address. TurboQuant's data-oblivious nature—requiring no dataset-specific calibration—is particularly valuable for security-conscious deployments.

For threat intelligence systems, document similarity engines, and anomaly detection frameworks, the ability to compress indices without sacrificing recall directly translates to better performance at scale.

The Path to Production: What Needs to Happen

For TurboQuant to move from research breakthrough to production reality, several things must occur:

- Official Implementation: Google needs to release production-quality code that developers can actually use

- Framework Integration: Major frameworks like vLLM, TensorRT-LLM, and llama.cpp need to adopt and optimize the algorithm

- Real-World Validation: The technique needs to prove itself in production environments with diverse workloads

- Ecosystem Adoption: Cloud providers and AI labs need to integrate it into their serving infrastructure

The good news? Several of these steps are already in motion. The community implementations suggest the technique works as described. The question is timeline, not feasibility.

Conclusion: A Genuine Inflection Point

TurboQuant represents something rare in AI research: a genuine breakthrough that addresses a critical bottleneck with measurable, dramatic improvements and few apparent trade-offs. The combination of 6x memory reduction, up to 8x speedup on attention-logit computation, and no measurable accuracy loss on the reported benchmarks is striking.

More importantly, it arrives at exactly the right moment. As AI systems push toward longer contexts, more complex reasoning, and broader deployment, memory constraints have become one of the primary limiting factors. TurboQuant directly attacks this constraint with a solution that's both theoretically elegant and practically implementable.

The research community has validated it through peer review at top conferences. Independent developers have validated it through working implementations. The financial markets have acknowledged its potential impact by immediately repricing memory stocks. And the tech community has embraced it with the kind of enthusiasm usually reserved for major product launches.

Whether you're an AI researcher, a developer building AI applications, an infrastructure engineer optimizing data centers, or simply someone who uses AI tools daily, TurboQuant matters. It's the kind of fundamental efficiency improvement that doesn't just make things marginally better—it changes what's possible.

For those tracking AI developments through platforms like Syncsup.cc, TurboQuant is a critical data point in understanding where the industry is headed. The future of AI won't just be about more powerful models—it will be about smarter, more efficient systems that deliver more capability with less infrastructure.

And that future is being written right now, one algorithm at a time.

Key Takeaways

- TurboQuant achieves 6x memory compression with no measurable accuracy loss through a two-stage process combining the PolarQuant and QJL algorithms

- Delivers up to 8x speedup in attention computation on NVIDIA H100 GPUs

- Requires no model retraining, fine-tuning, or dataset-specific calibration

- Applications extend beyond language models to vector search, recommendation systems, and more

- Community developers are already building working implementations despite no official code release

- Financial markets immediately recognized the potential impact on AI infrastructure economics

- Will be formally presented at ICLR 2026, backed by peer-reviewed companion papers at AAAI 2025 and AISTATS 2026

Related Resources

- Official Google Research Blog: TurboQuant: Redefining AI efficiency with extreme compression

- ICLR 2026 Paper: Available through academic channels (formal presentation April 2026)

- Community Implementations: Various GitHub repositories with PyTorch, MLX, and CUDA implementations